This is the story of how Octane Security’s AI won the Monad audit competition, placing first among over 1,600 security researchers in one of the largest blockchain contests ever.

How AI Won the Monad Audit Contest

This is the story of how Octane Security’s AI won the Monad audit competition, placing first among over 1,600 security researchers in one of the largest blockchain contests ever.

Monad is an EVM-compatible Layer 1 built for high throughput, targeting around 10,000 TPS with roughly 0.4s block times and sub-second finality.

To stress test the now open-source client, Monad ran a 27-day Code4rena audit contest from September 15 to October 12, 2025, with $500,000 on offer – the largest-ever unconditional prize pool.

Late Entry, Fast Sprint

At the time, we were building an experimental language-agnostic version of Octane’s security analysis engine, in parallel with some other work. The Monad audit contest fell into the “try it if we can” bucket, but it was not our top priority.

It was only with around a week to go that we had a language agnostic version we could test on the Monad codebase. We used this final week of the contest as best as we could to prepare a single analysis, triage the most promising findings from it, prepare the PoCs and reports, and then submit.

We delivered our submissions in the last few days of the contest.

But on October 9, 2025, Code4rena’s public contest repo updated scope boundaries tied to third-party audit reports. In particular, issues identified in Spearbit and Zellic reports became out of scope for submissions received after specific timestamps on October 9. We only learned about this on the 11th, when it was announced on Discord.

From our side, this created immediate uncertainty: we could very conceivably spend another couple of days grinding, only to have parts of the surface area invalidated ex post facto.

We stopped any further work on the contest at that point, assuming the additional expected value was not worth the effort. Luckily, only one of our findings (a high-severity) was invalidated by the third-party report overlap.

What AI Actually Did Here (and What It Didn’t)

We get a lot of questions about how our AI works.

Broadly speaking, Octane produces reports that are not intended for contests, meaning that severity, what’s considered exploitable or in-scope, and whether a finding is even worth submitting require human judgement. Additionally, reports need to be written differently for contests versus end users of our application. Also, PoCs for the code in question need to be created separately, which for infrastructure codebases can be an especially time consuming task. That’s what Octane doesn’t do.

What Octane does do is ingest a codebase, build working context across it, and generate findings: specific vulnerabilities with supporting reasoning and references to the relevant code. And it does all this fast and thoroughly enough to cover a surface area a human would need weeks or months to cover. So in this case, every finding submitted to the contest was found by AI but confirmed by a human researcher before submission.

The loop looks like this:

- Octane produces findings

- The reviewer uses local tools (typically agentic or traditional IDEs) to understand the findings (because you can’t brute force comprehension of a huge unfamiliar repo on an extremely tight deadline) and select the most promising ones as candidates for submitting to the contest

- The reviewer builds a PoC that proves the vulnerability as required by the rules.

- Finally, the reviewer uses the original finding and their understanding of the contest rules to draft a report for submission

Very roughly, from the findings whose impacts seemed most relevant for the contest, half were invalidated statically before we invested in PoC work. Then another half of the resulting PoCs died before becoming final submissions.

Working With 160,000 LOC

The Monad scope was huge: roughly 160k line of code split across Rust and C++.

Human-only review is not well suited to such large codebases, especially when time is as limited as it is during audit contests. Even the best security researchers will be forced to end up sampling portions of the codebase.

AI’s structural advantage here allows it to sweep, re-sweep, and continue to keep sweeping for bugs, with zero fatigue (just more tokens) and without needing to feel oriented before it becomes useful. It just gets straight to work, ingesting a huge amount of context in seconds.

There were some challenges, though. Both our human reviewers and LLMs struggled more with the C++ side of things. This was probably due to difficulties with determining which code was being used under what circumstances in the specific repo. That ambiguity led to a number of false positives as well as some potentially true positives that were hard to validate within the timeframe of the contest.

The Rust codebase was easier to analyze and validate, and the majority of our findings were there.

What We’d Change Next Time

We essentially did just one analysis run, and we only spent one week working with the codebase, not the full 27 day contest window.

With more time, we would have explored more surface area, iterated on analysis focus, and, frankly, gotten less lost in the huge and unfamiliar codebase.

We also made some deliberate tradeoffs. We did not focus on RPC layer denial-of-service style issues because they’re often lower severity, expensive to prove cleanly, and discounted in practice in competitive settings. Many valid mediums were in that area, and even though Octane had found a lot of such issues, we took a calculated risk and focused our limited triage and PoC time on the more severe and easier to prove impacts.

So Did AI Win?

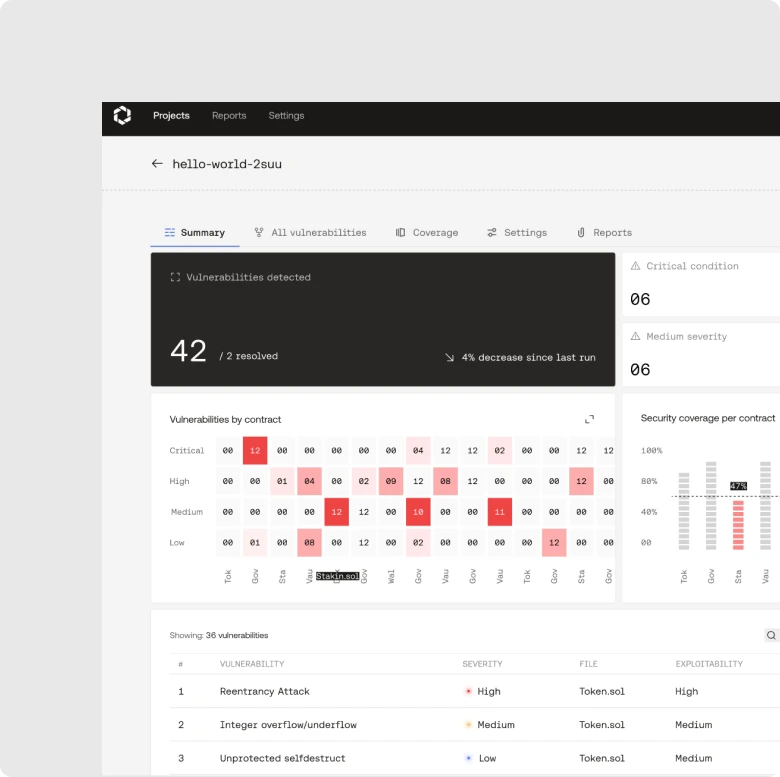

The contest had a $500,000 award pool, the largest ever unconditional pool of its kind, and it ultimately paid out three high and seven medium severity findings.

Three of those high severity findings were ours, and we placed #1 overall, taking home one-third of the prize pool.

So yes, AI won, in the sense that the decisive edge came from machine-scale discovery combined with human verification.

But it’s also true that competitive audits contain a certain degree of randomness: overlapping public disclosures, judge interpretations, scope edges,timing, and a good deal of chance and luck.

The more important takeaway is why exactly AI benefitted from a structural advantage here:

- Novel systems tend to have novel bugs

- Newly written code has more bugs than mature code

- Huge line count makes manual coverage difficult

- Non-Solidity infra code has a smaller pool of deeply experienced researchers

Why This Matters Outside Contests

The best part of the Monad audit contest for us wasn’t the leaderboard (though it is nice to see oct0pwn right at the top); it was the proof that a language agnostic engine can generate credible findings in Rust and C++ while a human spends their time where it provides the most value: validation, exploitability, and clear reporting.

That’s the same loop we offer teams who integrate Octane into their CICD:

- AI does the broad, repeated, context-aware vulnerability discovery

- Humans do the validation and remediation

The human-in-the-loop is either a developer – in the case of CICD integrations for end-user teams – or in-house security researchers – in the case of audit contests and custom reviews.

Ultimately, recent developments in code production and cybersecurity mean if you’re building anything that can’t afford to fail, that division of labor is the only scalable security posture.

Schedule a demo to get the same security analysis that won the Monad contest in your repo today.